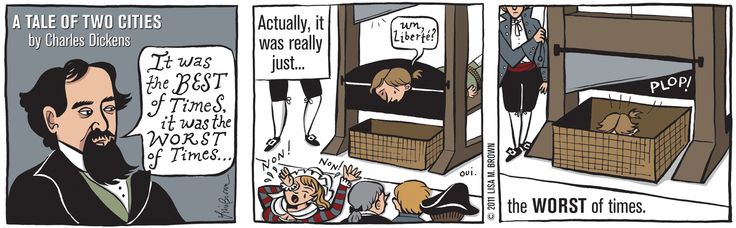

One of the most important aspects of leadership is changing the status quo. Things are never perfect and thus can always improve, and the only way to do that is to make changes. But doing so is tricky, because there are only a few ways it could go:

| | Implemented optimally | Implemented poorly |
| --- | --- | --- |
| The right change | Great! | Probably bad |
| The wrong change | Bad | Eek! |

There are a couple of other important dimensions to making a change, such as its urgency and impact, but they don’t change the point: there are a lot of ways to screw up the introduction of a change, and a pretty narrow set of ways to do it well. This is absolutely one of the cases in which the road to hell is paved with good intentions. Further below are my general principles for implementing beneficial changes in optimal ways, but first, a few caveats:

- Not uncommonly, changes come from outside our span of control: a new law or regulation, a change in strategy from the CEO, rippling effects from the latest financial models, or your best friend Dave, who manages another team, letting you know your team will have to spend 2 weeks refactoring their code because they need to swap out a library. Because of that, an equally important skill, alongside willingly introducing changes under your control, is managing change imposed by others, both in terms of material effects on the roadmap and in terms of morale. But that’s another article.

- No one gets it right anywhere near 100% of the time. Not only because it’s hard to even please most of the people most of the time, but also because organizations are what Cedric Chin calls complex adaptive systems, which are incredibly difficult to model: we as leaders can never hope to have enough visibility into the motivations, biases, understanding, etc., or many times even the response of any given person. (And if you’re interested in an in-depth look at introducing organizational changes, or org design in Chin’s words, definitely read his article The Skill of Org Design.)

- Each situation is unique, and there’s no generalizing change management. There are impactful changes and changes no one cares about. There are short-term and long-term changes. Ones that affect a lot of people and ones that don’t. Simple and complex. Clear and unclear effects. And on and on. The principles below, I’ve found to be widely applicable — though certainly not universal. However, I always consider them before occasionally sidestepping them.

- Your Values May Vary. I like building consensus when possible, I like engagement and participation, I like transparency, and I like debate. I think those things are fundamental to a great culture, a great working environment, and positive morale, all of which are important because happy employees are more productive. But some issues are divisive and there’s no consensus to be had, some people just don’t care about some things, sometimes we can’t show our cards, and endless debate is a drain on productivity. And while I agree that there’s a time for debate and a time for action, if you tend to index more on the latter set, you might not agree with the below.

And now, the list of 6 Simple Things to Make All Changes a Breeze. Kidding. There’s only 5.

Do as much homework & due diligence as practical

I believe in big design up front (though not waterfall) because efficiency is important to me. My time in the opera only cemented that:

[The operatic development process] should all be very familiar, but how does that differ from the process in most software shops? From my experience, it’s the beginning and the end: the design and the testing. Most shops focus on the middle: the development itself, with testing being a necessary evil, and design often being a quick drawing with some boxes and arrows, maybe just in someone’s head.

Software & Opera, Part 3: Design & Test

In programming, CI/CD, automated testing, and auto-updates make design defects less painful. But with organizational changes, it’s usually a lot harder to fix a defective change, or roll it back. So thinking about it thoroughly before deploying it — i.e., designing it well — is even more important.

A good design depends on a great understanding of the area in question, so it’s important to find out as much as time allows: to talk to the people with opinions, read what others have done, learn what the best practices are, and understand what happened in similar situations, and why. Just because something worked for Netflix doesn’t mean it’ll work for my team. The more I can refine my mental model of possible changes and how each option might affect this particular group of people, the more likely I’ll be to pick the optimal option, and the more successful the rollout is going to be. Ergo, this first step is worth spending as much time on as I need to feel comfortable about that mental model.

Shop and iterate the concept

Once I have something I think is good, I write it down. This is a draft of the written change and it’ll at least be referenced later by the “official” announcement, if not be included with it. Writing it down allows me to read it from the viewpoint of specific other people and better gauge what I think their reactions will be: to specific phrasings, to the tone of the whole, and to the intent of the document.

I then distill that into an elevator pitch, and casually drop it in conversation with some people I know will have thoughts. Maybe during a 1:1, or while waiting for a meeting to start, or whatever. “Hey Jane, I’ve been thinking about that problem and am curious what you’d think if we …”. Gauging that initial reaction is important. Is it positive? If not, is it an issue with how I said it, or a problem with the actual change? Why?

I go back and refine. Not everyone will like the change, but it’s important that I think this is still the best change, in spite of specific criticism, and that the right message is getting across — especially to the people that disagree with it. And if most people disagree with the change but I’m still convinced it’s the right one, I take a look at the messaging. What piece are they missing, or misunderstanding, or seeing differently? Sometimes it just comes down to differing values, in which case there’s little to be done but agreeing to disagree.

I then start showing people the written doc — again, live. The interested and affected parties first, then the remaining powers that be. Are they parsing the writing as intended? What helpful suggestions do they have? Is all the relevant context available to them?

Over-communicate

Once I’ve gotten input from as many interested, affected, or responsible people as I can (ideally all of them, but at least a significant sampling for larger changes), I share it out more widely, though informally, as a request-for-comments, probably in a relevant Slack channel: “We’re thinking about making a change to the thing to improve this stuff. Please share your thoughts!”.

By now, the reactions should hold no surprises. Hopefully my doc addresses the criticisms I’ve heard and thought of, though some people will still bring them to me: because they think they can change my mind through novel arguments, because they want to add to what they are sure is an enormous chorus of opposition, or maybe just because they didn’t read the doc carefully. WET comms are important in helping to avoid that last one, especially with larger groups: different people need the same thing communicated to them in different ways.

In this step, I try to engage with the bigger discussion as much as I can, but I’m more on the lookout for what I’ve missed earlier. The bee no one’s seen flying around. And if I see one, I evaluate the impact: how much does this change? If I’ve done my homework in step 1, it shouldn’t be fundamental. Hopefully I won’t have to go back to the core group I started with. But, better to do that than push The Wrong Change forward. Humility is important here. It’s easy to get attached to a bad idea because “no way it can be that wrong after all the thought and work I’ve put into it so far!”.

Be the glass you want to see through in the world

I value transparency, so I try to be as transparent as possible. This is not always possible, of course. But it’s possible more often than not. So in my document, I try to explain context, rationale, alternatives not chosen, and anything else that might be useful — not only to the curious bystander now, but to the next person who has my role. Or to my next boss. Or to the awesome engineer that starts next year. Or me in 3 years, having forgotten all the details of how this all went down.

Why was this decision taken? This particular one. Is it still valid under the current conditions, or should we do something else? Having everything written in the record can help dramatically both now and later.

Rush only when necessary

Sometimes change has to happen quickly. The monolith is on fire every other day. My best engineers are thinking about leaving. A re-org is coming next week. But most changes are not that. The new set of Priority fields in JIRA can be rolled out when they’re good and ready. Yes, they should improve reporting, but it’s more important to get it right, because we’re not changing them again this year. Probably not next year either.

It is important to keep making progress and not let proposed and socialized changes languish because “I’ve been so swamped this quarter”, but as long as useful activity is happening, it shouldn’t be rushed unless it needs to be. And sometimes change is urgent and so skipping through some of the above principles, or abbreviating them, is needed.

But when it’s not, and news of the change has spread through the grapevine and people are clamoring for it, take that as good news! It’s useful to update them on the status, but the great part is that they want the change, which makes the over-communication aspect way easier. And of course it adds some time pressure with the masses waiting with bated breath, but it’s still important to not get too eager, because again: it’s often hard to roll organizational changes back. Even if it’s just a matter of sending an email and no one has to even remember to change their workflows again, it’s still trust in your insight as a leader that gets lost. Political capital.

As leaders, the more of a track record we have of making thoughtful, positive changes, the easier it is to get consensus and make subsequent changes. It’s a feedback loop that’s driven — like a lot of things — by care and diligence, paying attention to details and, more than anything, valuing people.